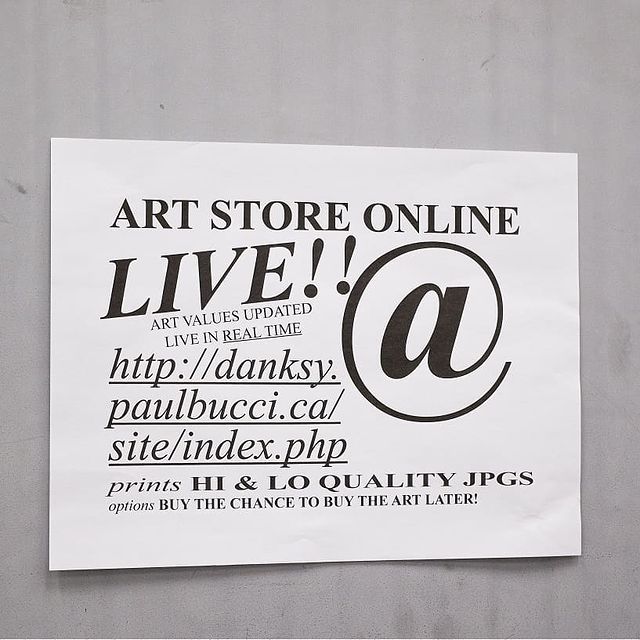

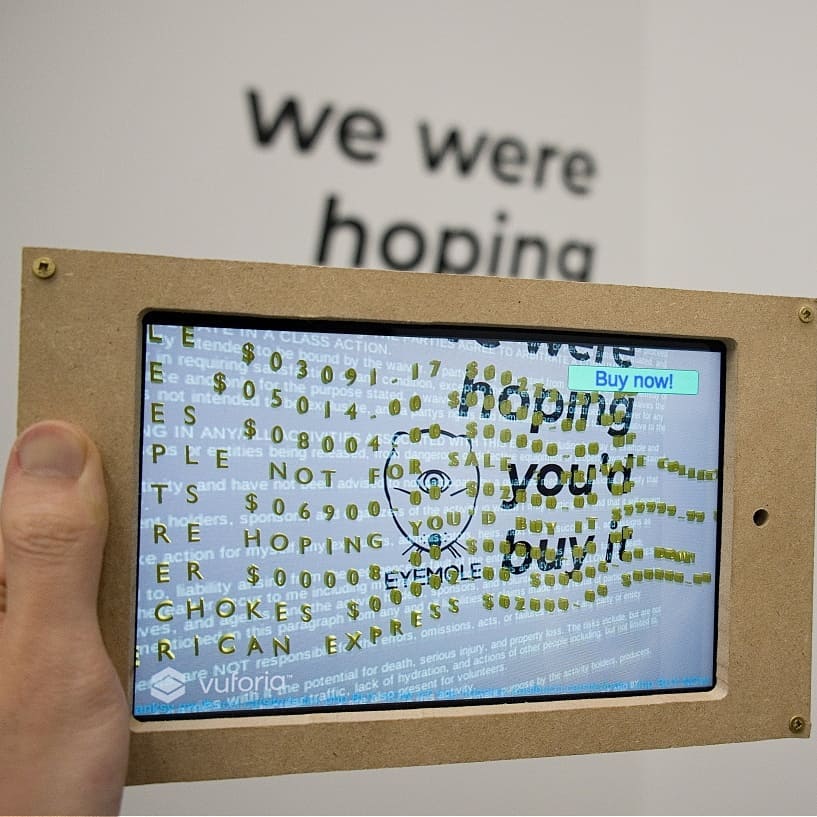

Eyemole was an interactive arts cooperative that engaged in corporate roleplay. For one show, we bought a set of cheap paintings and created augmented reality overlays as the hidden digital artwork. Each artwork was hooked into an e-commerce platform that updated its exchange value using a different algorithm for each piece (duration of viewing time, number of purchases, etc.). As part of the performance, we negotiated and signed legal agreements for the intellectual property rights for each piece.

Author: Paul Bucci

-

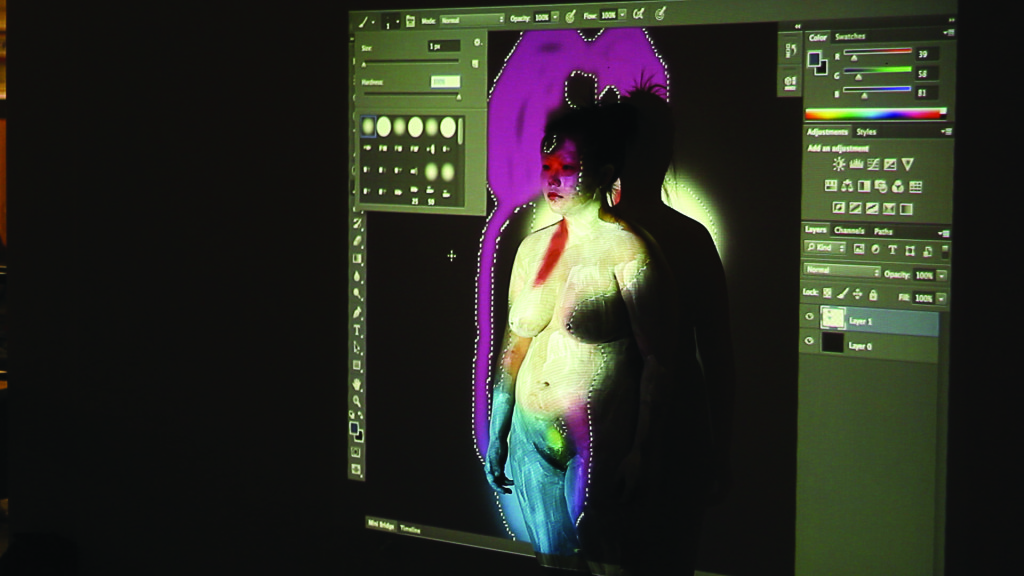

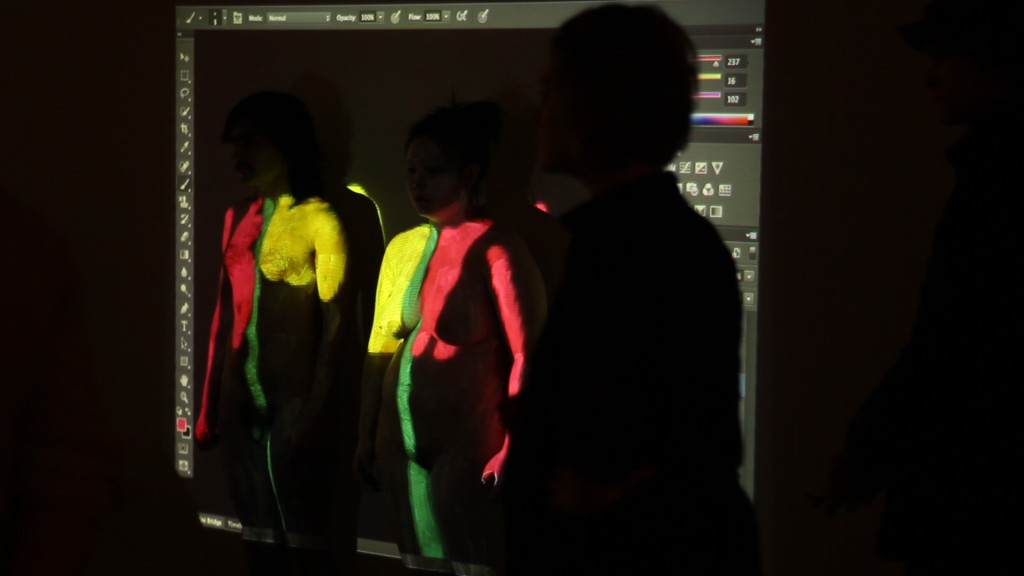

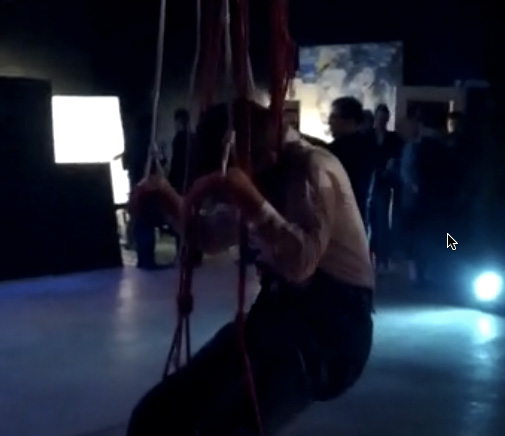

Performance: manipulated bodies

In collaboration with artist Kathy Yan Li, we created a series of performances that dealt with digital and physical body manipulations. In two performances, I painted Kathy with white paint and invited audience members to Photoshop her live.

In one performance, an audience member felt it was unfair to have only Kathy painted, and wanted me to paint him as well, so I did.

In a second series, Kathy hung my body from the ceiling and invited audience members to puppet my limbs.

-

How should we measure emotion?

In a world of high-tech sensors and machine learning, it seems like we can build a machine to detect anything. But if people have a hard time figuring out how they feel, how can we build machines that detect emotion?

Related academic publications

> P. H. Bucci, X. L. Cang, H. Mah, L. Rodgers, and K. E. MacLean, “Real emotions don’t stand still: Toward ecologically viable representation of affective interaction,” in 2019 8th International Conference on Affective Computing and Intelligent Interaction (ACII), IEEE, 2019, pp. 1–7.

> P. H. Bucci, D. Marino, and I. Beschastnikh, “Affective robots need therapy,” ACM Transactions on Human-Robot Interaction, vol. 12, no. 2, pp. 1–22, Mar. 2023.

-

Complex stories about simple robots

Given what we found when we asked voice actors to puppet our robots, we wanted to test how the stories people told about our robots impacted their perceptions of the robots’ emotions. Turns out, the stories mattered a lot.

Related academic publications

> P. Bucci, L. Zhang, X. L. Cang, and K. E. MacLean, “Is it happy? behavioural and narrative frame complexity impact perceptions of a simple furry robot’s emotions,” in Proceedings of the 2018 CHI Conference on Human Factors in Computing Systems, 2018, pp. 1–11.

-

Voodle: translating sound to movement

Designing naturalistic robot motion is tough. We found that using our voices to make a robot move gave us some delightful expressions. But when we tested the system with voice actors, we were surprised to see how engaged and emotional they were.

Related academic publications

> P. Bucci, X. L. Cang, A. Valair, D. Marino, L. Tseng, M. Jung, J. Rantala, O. S. Schneider, and K. E. MacLean, “Sketching cuddlebits: Coupled prototyping of body and behaviour for an affective robot pet,” in Proceedings of the 2017 CHI Conference on Human Factors in Computing Systems, ACM, 2017, pp. 3681–3692.

> D. Marino, P. Bucci, O. S. Schneider, and K. E. MacLean, “Voodle: Vocal doodling to sketch affective robot motion,” in Proceedings of the 2017 Conference on Designing Interactive Systems, ACM, 2017, pp. 753–765.

-

Detecting gestures on a fabric touch sensor

Developing furry robots that can detect and respond to emotional touch requires soft touch sensors and gesture detection algorithms. We developed a fabric touch sensor and a system that could detect different touches—like pats, scratches, and tickles.

Related academic publications

> X. L. Cang, P. Bucci, J. Rantala and K. E. MacLean, “Discerning Affect From Touch and Gaze During Interaction With a Robot Pet,” in IEEE Transactions on Affective Computing, vol. 14, no. 2, pp. 1598-1612, 1 April-June 2023, doi: 10.1109/TAFFC.2021.3094894

> Cang, X. L., Bucci, P., Strang, Allen, J., MacLean, K. E., and Liu, H. Y. S., “Different Strokes and Different Folks: Economical Dynamic Surface Sensing and Affect-Related Touch Recognition.” In Proceedings of the 2015 ACM on International Conference on Multimodal Interaction (ICMI ’15). ACM, Seattle, WA, USA, Nov 2015, pp. 147-154.

-

CuddleBits: Small furry robots

The CuddleBits are handheld furry robots that express emotions through breathing movements. We wanted to make a lot of different sizes and shapes, so I created two design systems that could be easily modified (full instructions here).

One of the CuddleBits breathing calmly. They are cheap, simple tools to study emotional touch.

We wrapped them in our fabric touch sensor and placed them in many different emotional situations. We asked people to tell them emotional stories (like a talk therapy session). We asked voice actors to puppet them.

The project started off as a way to build smaller, cheaper versions of the Haptic Creature and CuddleBot. It was a design challenge: I was asked to make a pocket-sized version that used only one motor and still expressed a wide range of emotion. I went through an extensive low-fidelity prototyping process, starting with cardboard, Meccano, and duotang binders.

Related academic publications

> Witkower, Z., Cang, L., Bucci, P., MacLean, K., & Tracy, J. L. (2025). Human psychophysiology is influenced by physical touch with a “breathing” robot. Emotion. Advance online publication. https://doi.org/10.1037/emo0001601

> P. Bucci, L. Zhang, X. L. Cang, and K. E. MacLean, “Is it happy? behavioural and narrative frame complexity impact perceptions of a simple furry robot’s emotions,” in Proceedings of the 2018 CHI Conference on Human Factors in Computing Systems, 2018, pp. 1–11.

> L. H. Zhang, P. Bucci, X. L. Cang, and K. MacLean, “Infusing CuddleBits with Emotion: Build your own and tell us about it,” in Extended Abstracts of the 2018 CHI Conference on Human Factors in Computing Systems, 2018, pp. 1–4.

> P. Bucci, “Building believable robots: An exploration of how to make simple robots look, move, and feel right,” Master’s Thesis, University of British Columbia, 2017.

> P. Bucci, X. L. Cang, A. Valair, D. Marino, L. Tseng, M. Jung, J. Rantala, O. S. Schneider, and K. E. MacLean, “Sketching cuddlebits: Coupled prototyping of body and behaviour for an affective robot pet,” in Proceedings of the 2017 CHI Conference on Human Factors in Computing Systems, ACM, 2017, pp. 3681–3692.

> D. Marino, P. Bucci, O. S. Schneider, and K. E. MacLean, “Voodle: Vocal doodling to sketch affective robot motion,” in Proceedings of the 2017 Conference on Designing Interactive Systems, ACM, 2017, pp. 753–765.

> P. Bucci, L. Cang, M. Chun, D. Marino, O. Schneider, H. Seifi, and K. MacLean, “Cuddlebits: An iterative prototyping platform for complex haptic display,” Eurohaptics Demo Session, 2016.

> L. Cang, P. Bucci, and K. E. MacLean, “Cuddlebits: Friendly, low-cost furballs that respond to touch,” in Proceedings of the 2015 ACM on International Conference on Multimodal Interaction, ACM, 2015, pp. 365–366.

-

Reddit’s Am I the Asshole?

Reddit’s Am I the Asshole advice forum is a rich source of information on what people consider to be moral and good in our culture. I am using natural language processing (NLP) methods to support a deep read of social norms around device privacy and interpersonal trust.